Latest Posts

How To Create A Self-Signed SSL Certificate Using Bash And OpenSSL

By Cyber Defence on June 8, 2026

How To Debug Bash Scripts Using bash -x And set Commands

By Cyber Defence on June 8, 2026

How To Use Cron Jobs With Bash Scripts For Automation

By Cyber Defence on June 8, 2026

How To Use Pipes In Bash Scripts For Command Chaining

By Cyber Defence on June 8, 2026

Trending Posts

Email to Profile: Social Media Search and Free Lookup Tools

By 0xSnow on November 3, 2025

Advanced Free Email Lookup and Reverse Search Techniques

By 0xSnow on November 3, 2025

How to Use Pentest Copilot in Kali Linux

By 0xSnow on November 1, 2025

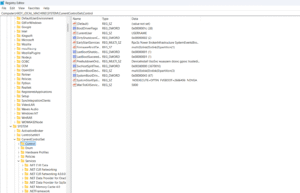

How to Use the Windows Registry to Optimize and Control Your PC

By Tamilselvan S on October 30, 2025

MQTT Security: Securing IoT Communications

By 0xSnow on October 30, 2025

Why Generative AI is the New Standard for Video Restoration in 2026

By 0xSnow on June 2, 2026

Bash Case Statement: How To Match Patterns In Shell Scripts

By Cyber Defence on June 3, 2026

How To Use Bash For Loop With Practical Examples

By Cyber Defence on June 3, 2026

How To Compare Strings In Bash Scripts

By Cyber Defence on June 3, 2026

Bash While Loop Explained With Examples

By Cyber Defence on June 3, 2026

Latest News

How EDR Killers Bypass Security Tools

By 0xSnow on March 19, 2026

Fake VPN Download Trap Can Steal Your Work Login in Minutes

By 0xSnow on March 18, 2026

This Android Bug Can Crack Your Lock Screen in 60 Seconds

By 0xSnow on March 14, 2026

Microsoft Authenticator Flaw Could Leak Login Codes

By 0xSnow on March 13, 2026

BlackSanta Malware: A Stealthy Threat Targeting Recruiters and HR Teams

By 0xSnow on March 12, 2026

Microsoft Unveils “Project Helix”: A Next-Gen Xbox Merging Console and PC Gaming

By 0xSnow on March 6, 2026

Free Email Lookup Tools and Reverse Email Search Resources

By 0xSnow on March 6, 2026

How AI Puts Data Security at Risk

By 0xSnow on December 13, 2025

The Evolution of Cloud Technology: Where We Started and Where We’re Headed

By 0xSnow on December 9, 2025

The Evolution of Online Finance Tools In a Tech-Driven World

By 0xSnow on December 9, 2025