Netdata is distributed, real-time, performance and health monitoring for systems and applications. It is a highly optimized monitoring agent you install on all your systems and containers.

Netdata provides unparalleled insights, in real-time, into everything happening on the systems it runs on (including web servers, databases, applications), using highly interactive web dashboards.

It can run autonomously, without any third-party components, or it can be integrated into existing monitoring tool chains (Prometheus, Graphite, OpenTSDB, Kafka, Grafana, etc.).

Netdata is fast and efficient, designed to permanently run on all systems (physical & virtual servers, containers, IoT devices), without disrupting their core function.

Netdata is free, open-source software and it currently runs on Linux, FreeBSD, and macOS.

Netdata is in the Cloud Native Computing Foundation (CNCF) landscape and it is the 3rd most starred open-source project. Check the CNCF TOC Netdata presentation.

People get addicted to netdata. Once you use it on your systems, there is no going back! You have been warned.

How it looks

The following animated image shows the top part of a typical netdata dashboard.

A typical netdata dashboard, in 1:1 timing. Charts can be panned by dragging them and zoomed in/out with SHIFT + mouse wheel, and an area can be selected for zoom-in with SHIFT + mouse selection.

Netdata is highly interactive and real-time, optimized to get the work done!

User base

Netdata is used by hundreds of thousands of users all over the world. Check our GitHub watchers list. You will find people working for Amazon, Atos, Baidu, Cisco Systems, Citrix, Deutsche Telekom, DigitalOcean, Elastic, EPAM Systems, Ericsson, Google, Groupon, Hortonworks, HP, Huawei, IBM, Microsoft, NewRelic, Nvidia, Red Hat, SAP, Selectel, TicketMaster, Vimeo, and many more!

Registry

When you install netdata on multiple systems, the instances are integrated into one distributed application via a netdata registry. This is a web browser feature, and it allows us to count the number of unique users and unique netdata servers installed.

Quick Start

You can quickly install netdata on a Linux box (physical, virtual, container, IoT) with the following command:

# make sure you run bash for your shell
bash

# install netdata, directly from github sources
bash <(curl -Ss https://my-netdata.io/kickstart.sh)

The above command will:

- install any required packages on your system (it will ask you to confirm before doing so),

- compile it, install it and start it

To try netdata in a docker container, run this:

docker run -d --name=netdata \
  -p 19999:19999 \
  -v /proc:/host/proc:ro \
  -v /sys:/host/sys:ro \
  -v /var/run/docker.sock:/var/run/docker.sock:ro \
  --cap-add SYS_PTRACE \
  --security-opt apparmor=unconfined \
  netdata/netdata
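
With the container running, the agent is reachable on the published port 19999. As a rough sketch, assuming the agent serves data queries under `/api/v1/data` with `chart`, `after` and `format` query parameters (verify this against the API documentation of your version), a query URL could be built as follows. The helper name `data_url` and the chart id `system.cpu` are illustrative assumptions.

```python
from urllib.parse import urlencode


def data_url(chart, seconds, host="localhost", port=19999):
    """Build a URL asking for the last `seconds` of one chart as JSON.

    Assumption: the endpoint path and parameter names follow the agent's
    v1 REST API; adjust them if your version differs.
    """
    query = urlencode({"chart": chart, "after": -seconds, "format": "json"})
    return f"http://{host}:{port}/api/v1/data?{query}"


url = data_url("system.cpu", 60)
print(url)
```

The resulting URL can be opened in a browser or fetched with any HTTP client.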

Why Netdata?

Netdata has a quite different approach to monitoring.

Netdata is a monitoring agent you install on all your systems. It is:

- a metrics collector – for system and application metrics (including web servers, databases, containers, etc)

- a time-series database – all stored in memory (does not touch the disks while it runs)

- a metrics visualizer – super fast, interactive, modern, optimized for anomaly detection

- an alarms notification engine – an advanced watchdog for detecting performance and availability issues

All the above are packaged together in a very flexible, extremely modular, distributed application.

This is how netdata compares to other monitoring solutions:

| Netdata | Others (open-source and commercial) |
| --- | --- |
| High resolution metrics (1s granularity) | Low resolution metrics (10s granularity at best) |
| Monitors everything, thousands of metrics per node | Monitor just a few metrics |
| UI is super fast, optimized for anomaly detection | UI is good for just an abstract view |
| Meaningful presentation, to help you understand the metrics | You have to know the metrics before you start |
| Install and get results immediately | Long preparation is required to get any useful results |
| Use it for troubleshooting performance problems | Use them to get statistics of past performance |
| Kills the console for tracing performance issues | The console is always required for troubleshooting |
| Requires zero dedicated resources | Require large dedicated resources |

Netdata is open-source, free, super fast, very easy, completely open, extremely efficient, flexible and integrate-able.

It has been designed by SysAdmins, DevOps and Developers for troubleshooting performance problems, not just for visualizing metrics.

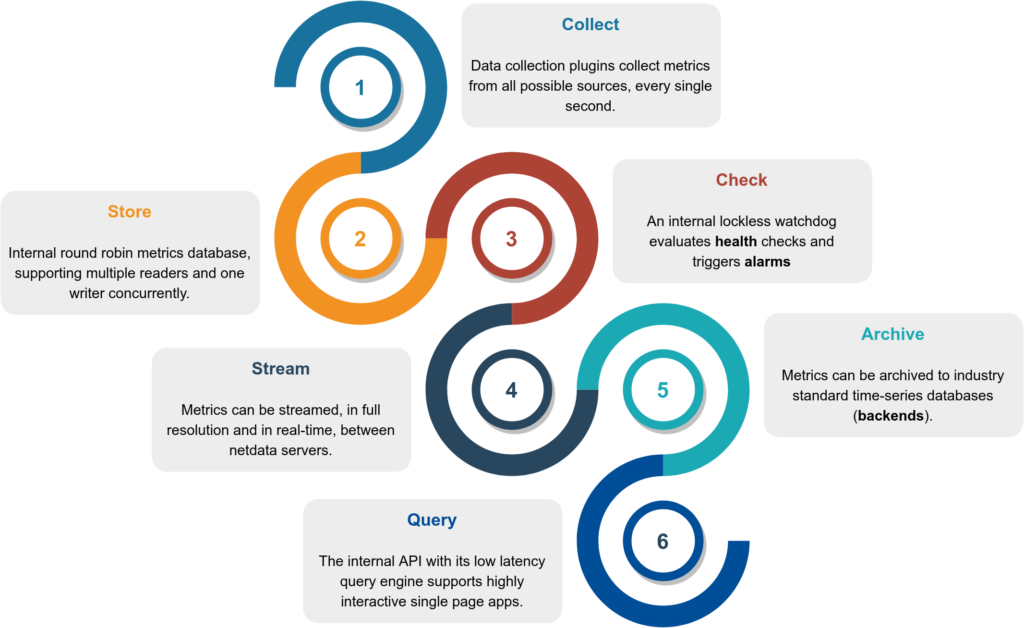

How it works

Netdata is a highly efficient, highly modular, metrics management engine. Its lockless design makes it ideal for concurrent operations on the metrics.

This is how it works:

- Collect : Multiple independent data collection workers collect metrics from their sources, using the optimal protocol for each application, and push the metrics to the database. Each data collection worker has lockless write access to the metrics it collects.

- Store : Metrics are stored in RAM in a round robin database (ring buffer), using a custom-made floating point number for minimal footprint.

- Check : A lockless independent watchdog evaluates health checks on the collected metrics, triggers alarms, maintains a health transaction log and dispatches alarm notifications.

- Stream : A lockless independent worker streams metrics, in full detail and in real-time, to remote netdata servers, as soon as they are collected.

- Archive : A lockless independent worker down-samples the metrics and pushes them to backend time-series databases.

- Query : Multiple independent workers are attached to the internal web server, servicing API requests, including data queries.

The result is a highly efficient, low latency system, supporting multiple readers and one writer on each metric.
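
The Store step can be pictured with a small sketch of a round robin (ring buffer) store with one writer and any number of readers. This is illustrative only: the class `RingBuffer`, its methods and the capacity are hypothetical names chosen for this example, and it uses plain Python floats rather than the custom number format mentioned above. It is not the agent's internal code.

```python
class RingBuffer:
    """Fixed-size round robin store: new samples overwrite the oldest ones."""

    def __init__(self, capacity):
        self.capacity = capacity
        self.slots = [None] * capacity  # preallocated once, never grows
        self.written = 0                # total number of samples ever written

    def append(self, value):
        # Single writer: only the collector of this metric calls append().
        self.slots[self.written % self.capacity] = value
        self.written += 1

    def latest(self, n):
        # Readers receive up to the last n samples, oldest first.
        n = min(n, self.written, self.capacity)
        start = self.written - n
        return [self.slots[i % self.capacity] for i in range(start, self.written)]


rb = RingBuffer(capacity=5)
for second in range(8):
    rb.append(second * 1.5)

print(rb.latest(3))
```

Because the buffer has a fixed size, memory use is decided up front and retention is simply the capacity multiplied by the collection interval.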

Infographic

This is a high level overview of the netdata feature set and architecture.

Click it to interact with it (it has direct links to documentation).

Features

This is what you should expect from Netdata:

General

- 1s granularity – the highest possible resolution for all metrics.

- Unlimited metrics – collects all the available metrics, the more the better.

- 1% CPU utilization of a single core – it is super fast, unbelievably optimized.

- A few MB of RAM – by default it uses 25MB RAM. You size it.

- Zero disk I/O – while it runs, it does not load or save anything (except error and access logs).

- Zero configuration – auto-detects everything, it can collect up to 10000 metrics per server out of the box.

- Zero maintenance – You just run it, it does the rest.

- Zero dependencies – it is even its own web server, for its static web files and its web API (though its plugins may require additional libraries, depending on the applications monitored).

- Scales to infinity – you can install it on all your servers, containers, VMs and IoTs. Metrics are not centralized by default, so there is no limit.

- Several operating modes – Autonomous host monitoring (the default), headless data collector, forwarding proxy, store and forward proxy, central multi-host monitoring, in all possible configurations. Each node may have different metrics retention policy and run with or without health monitoring.

Health Monitoring & Alarms

- Sophisticated alerting : comes with hundreds of alarms, out of the box! Supports dynamic thresholds, hysteresis, alarm templates, multiple role-based notification methods.

- Notifications : alerta.io, amazon sns, discordapp.com, email, flock.com, irc, kavenegar.com, messagebird.com, pagerduty.com, prowl, pushbullet.com, pushover.net, rocket.chat, slack.com, smstools3, syslog, telegram.org, twilio.com, web and custom notifications.
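
Hysteresis, mentioned in the alerting bullet, means an alarm is raised at one threshold and cleared only at a lower one, so a value hovering around a single limit does not flap. A minimal sketch of the idea follows; the function name `evaluate` and the threshold values are hypothetical, and this is not the syntax of the health configuration files.

```python
def evaluate(values, raise_at, clear_at):
    """Return the alarm state after each sample, using two thresholds."""
    active = False
    states = []
    for v in values:
        if not active and v >= raise_at:
            active = True       # crossed the upper threshold: raise
        elif active and v < clear_at:
            active = False      # dropped below the lower threshold: clear
        states.append(active)
    return states


cpu = [70, 92, 88, 81, 79, 85]
states = evaluate(cpu, raise_at=90, clear_at=80)
print(states)
```

Note that the values 88 and 81 keep the alarm active even though they are below the raise threshold, and 85 does not raise it again after it has cleared.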

Integrations

- time-series dbs – can archive its metrics to graphite, opentsdb, prometheus, json document DBs, in the same or lower resolution (lower: to prevent it from congesting these servers due to the amount of data collected).
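
Archiving at a lower resolution amounts to collapsing several per-second samples into one point before sending it on. The sketch below assumes averaging as the aggregation method, which is a choice made for this example (the text above only says "lower resolution"); `downsample` is a hypothetical helper.

```python
def downsample(samples, factor):
    """Average every `factor` consecutive samples into one point."""
    out = []
    for i in range(0, len(samples), factor):
        chunk = samples[i:i + factor]  # the last chunk may be shorter
        out.append(sum(chunk) / len(chunk))
    return out


per_second = [10, 20, 30, 40, 50, 60, 70]
per_three_seconds = downsample(per_second, 3)
print(per_three_seconds)
```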

Visualization

- Stunning interactive dashboards – mouse, touchpad and touch-screen friendly in 2 themes: slate (dark) and white.

- Amazingly fast visualization – responds to all queries in less than 1 ms per metric, even on low-end hardware.

- Visual anomaly detection – the dashboards are optimized for detecting anomalies visually.

- Embeddable – its charts can be embedded on your web pages, wikis and blogs. You can even use Atlassian’s Confluence as a monitoring dashboard.

- Customizable – custom dashboards can be built using simple HTML (no javascript necessary).

Positive and negative values

To improve clarity on charts, netdata dashboards present positive values for metrics representing read, input, inbound, received and negative values for metrics representing write, output, outbound, sent.

Netdata charts showing the bandwidth and packets of a network interface. received is positive and sent is negative.
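
The sign convention can be expressed in a few lines. This is only an illustration of the presentation rule described above; `to_chart_values` is a hypothetical helper, not part of the dashboard code.

```python
def to_chart_values(received, sent):
    """Map raw rates to signed chart values: inbound up, outbound down."""
    return {"received": abs(received), "sent": -abs(sent)}


point = to_chart_values(received=120.0, sent=45.5)
print(point)
```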

Autoscaled y-axis

Netdata charts automatically zoom vertically, to visualize the variation of each metric within the visible time-frame.

A zero-based stacked chart automatically switches to an auto-scaled area chart when a single dimension is selected.
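
One way to picture vertical auto-scaling: the y-axis range is derived from the minimum and maximum of the values currently visible, rather than starting at zero. The sketch below is an assumption about the general technique, not the dashboard's actual algorithm; `y_range` and its `padding` parameter are hypothetical.

```python
def y_range(visible, padding=0.05):
    """Return (low, high) axis limits that fit the visible values."""
    lo, hi = min(visible), max(visible)
    span = (hi - lo) or 1.0  # avoid a zero-height axis on flat data
    return lo - span * padding, hi + span * padding


limits = y_range([40, 50, 60], padding=0.25)
print(limits)
```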

Charts are synchronized

Charts on netdata dashboards are synchronized to each other. There is no master chart. Any chart can be panned or zoomed at any time, and all other charts will follow.

Charts are panned by dragging them with the mouse. Charts can be zoomed in/out with SHIFT + mouse wheel while the mouse pointer is over a chart.

The visible time-frame (pan and zoom) is propagated from netdata server to netdata server, when navigating via the my-netdata menu.

Highlighted time-frame

To improve visual anomaly detection across charts, the user can highlight a time-frame (by pressing ALT + mouse selection) on all charts.

A highlighted time-frame can be given by pressing ALT + mouse selection on any chart. Netdata will highlight the same range on all charts.

Highlighted ranges are propagated from netdata server to netdata server, when navigating via the my-netdata menu.

What does it monitor

Netdata data collection is extensible – you can monitor anything you can get a metric for. Its Plugin API supports all programming languages (anything can be a netdata plugin: BASH, python, perl, node.js, java, Go, ruby, etc).

- For better performance, most system related plugins (cpu, memory, disks, filesystems, networking, etc) have been written in C.

- For faster development and easier contributions, most application related plugins (databases, web servers, etc) have been written in python.
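
To make "anything can be a plugin" concrete: an external plugin is, to the best of my understanding, a program that prints plain text lines to standard output, first declaring charts and dimensions and then sending values. The sketch below assumes the `CHART`, `DIMENSION`, `BEGIN`, `SET` and `END` keywords of that text protocol; the chart id `example.random`, the dimension `number` and the helper names are made up for illustration, and the exact field list should be checked against the plugin documentation.

```python
import random
import sys


def chart_definition():
    """Lines declaring one chart with one dimension (sent once at startup)."""
    return [
        # Assumed field order: type.id, name, title, units, family, context, chart type
        "CHART example.random '' 'A random number' 'value' random random line",
        # Assumed field order: id, name, algorithm, multiplier, divisor
        "DIMENSION number '' absolute 1 1",
    ]


def chart_update(value):
    """Lines reporting one new value for the chart declared above."""
    return ["BEGIN example.random", f"SET number = {value}", "END"]


for line in chart_definition() + chart_update(random.randint(0, 100)):
    print(line)
sys.stdout.flush()  # the agent reads the pipe, so do not leave lines buffered
```

A real plugin would repeat the update step once per collection interval instead of exiting after a single value.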

APM (Application Performance Monitoring)

- statsd – netdata is a fully featured statsd server.

- Go expvar – collects metrics exposed by applications written in the Go programming language using the expvar package.

- Spring Boot – monitors running Java Spring Boot applications that expose their metrics with the use of the Spring Boot Actuator included in Spring Boot library.

- uWSGI – collects performance metrics from uWSGI applications.
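
Since the agent acts as a statsd server, an application can report a metric by sending a small text datagram in the usual statsd `name:value|type` form. The sketch below assumes the server listens on UDP port 8125 on localhost (the conventional statsd port; confirm it in your configuration). The metric name `myapp.requests` and the helper names are hypothetical.

```python
import socket


def statsd_packet(name, value, metric_type):
    """Encode one metric in the statsd line format, e.g. b'x:1|c'."""
    return f"{name}:{value}|{metric_type}".encode("ascii")


def send_metric(name, value, metric_type="c", host="127.0.0.1", port=8125):
    """Send one metric over UDP; fire-and-forget, no reply is expected."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(statsd_packet(name, value, metric_type), (host, port))


packet = statsd_packet("myapp.requests", 1, "c")
print(packet)
```

Calling `send_metric("myapp.requests", 1)` from the application would then increment a counter; `"g"` and `"ms"` are the usual statsd types for gauges and timers.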

System Resources

- CPU Utilization – total and per core CPU usage.

- Interrupts – total and per core CPU interrupts.

- SoftIRQs – total and per core SoftIRQs.

- SoftNet – total and per core SoftIRQs related to network activity.

- CPU Throttling – collects per core CPU throttling.

- CPU Frequency – collects the current CPU frequency.

- CPU Idle – collects the time spent per processor state.

- IdleJitter – measures CPU latency.

- Entropy – random numbers pool, used in cryptography.

- Interprocess Communication – IPC – such as semaphores and semaphore arrays.

Memory

- ram – collects info about RAM usage.

- swap – collects info about swap memory usage.

- available memory – collects the amount of RAM available for userspace processes.

- committed memory – collects the amount of RAM committed to userspace processes.

- Page Faults – collects the system page faults (major and minor).

- writeback memory – collects the system dirty memory and writeback activity.

- huge pages – collects the amount of RAM used for huge pages.

- KSM – collects info about Kernel Samepage Merging (memory deduplication).

- NUMA – collects NUMA info on systems that support it.

- slab – collects info about the Linux kernel memory usage.

Disks

- block devices – per disk: I/O, operations, backlog, utilization, space, etc.

- BCACHE – detailed performance of SSD caching devices.

- DiskSpace – monitors disk space usage.

- mdstat – software RAID.

- hddtemp – disk temperatures.

- smartd – disk S.M.A.R.T. values.

- device mapper – naming disks.

- Veritas Volume Manager – naming disks.

- megacli – adapter, physical drives and battery stats.

- adaptec_raid – logical and physical devices health metrics.

Filesystems

- BTRFS – detailed disk space allocation and usage.

- Ceph – OSD usage, Pool usage, number of objects, etc.

- NFS file servers and clients – NFS v2, v3, v4: I/O, cache, read ahead, RPC calls

- Samba – performance metrics of Samba SMB2 file sharing.

- ZFS – detailed performance and resource usage.

Networking

- Network Stack – everything about the networking stack (both IPv4 and IPv6 for all protocols: TCP, UDP, SCTP, UDPLite, ICMP, Multicast, Broadcast, etc), and all network interfaces (per interface: bandwidth, packets, errors, drops).

- Netfilter – everything about the netfilter connection tracker.

- SynProxy – collects performance data about the linux SYNPROXY (DDoS).

- NFacct – collects accounting data from iptables.

- Network QoS – the only tool that visualizes network tc classes in real-time

- FPing – to measure latency and packet loss between any number of hosts.

- ISC dhcpd – pools utilization, leases, etc.

- AP – collects Linux access point performance data (hostapd).

- SNMP – SNMP devices can be monitored too (although you will need to configure these).

- port_check – checks TCP ports for availability and response time.

Virtual Private Networks

- OpenVPN – collects status per tunnel.

- LibreSwan – collects metrics per IPSEC tunnel.

- Tor – collects Tor traffic statistics.

Processes

- System Processes – running, blocked, forks, active.

- Applications – by grouping the process tree and reporting CPU, memory, disk reads, disk writes, swap, threads, pipes, sockets – per process group.

- systemd – monitors systemd services using CGROUPS.
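
Reporting per process group boils down to summing the resources of all processes that belong to the same group. The sketch below is a simplification: it groups by process name using a hypothetical mapping, whereas the text above describes grouping over the process tree. `cpu_by_group` and the sample data are invented for illustration.

```python
from collections import defaultdict


def cpu_by_group(processes, groups):
    """Sum CPU per group; processes not listed in `groups` go to 'other'."""
    totals = defaultdict(float)
    for name, cpu in processes:
        totals[groups.get(name, "other")] += cpu
    return dict(totals)


procs = [("nginx", 2.0), ("nginx", 1.5), ("mysqld", 4.0), ("cron", 0.5)]
groups = {"nginx": "web", "mysqld": "sql"}
usage = cpu_by_group(procs, groups)
print(usage)
```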

Users

- Users and User Groups resource usage – by summarizing the process tree per user and group, reporting: CPU, memory, disk reads, disk writes, swap, threads, pipes, sockets

- logind – collects sessions, users and seats connected.

Containers and VMs

- Containers – collects resource usage for all kinds of containers, using CGROUPS (systemd-nspawn, lxc, lxd, docker, kubernetes, etc).

- libvirt VMs – collects resource usage for all kinds of VMs, using CGROUPS.

- dockerd – collects docker health metrics.

Web Servers

- Apache and lighttpd – mod-status (v2.2, v2.4) and cache log statistics, for multiple servers.

- IPFS – bandwidth, peers.

- LiteSpeed – reads the litespeed rtreport files to collect metrics.

- Nginx – stub-status, for multiple servers.

- Nginx+ – connects to multiple nginx_plus servers (local or remote) to collect real-time performance metrics.

- PHP-FPM – multiple instances, each reporting connections, requests, performance, etc.

- Tomcat – accesses, threads, free memory, volume, etc.

- web server access.log files – extracting in real-time, web server and proxy performance metrics and applying several health checks, etc.

- HTTP check – checks one or more web servers for HTTP status code and returned content.

Proxies, Balancers, Accelerators

- HAproxy – bandwidth, sessions, backends, etc.

- Squid – multiple servers, each showing: clients bandwidth and requests, servers bandwidth and requests.

- Traefik – connects to multiple traefik instances (local or remote) to collect API metrics (response status code, response time, average response time and server uptime).

- Varnish – threads, sessions, hits, objects, backends, etc.

- IPVS – collects metrics from the Linux IPVS load balancer.

Database Servers

- CouchDB – reads/writes, request methods, status codes, tasks, replication, per-db, etc.

- MemCached – multiple servers, each showing: bandwidth, connections, items, etc.

- MongoDB – operations, clients, transactions, cursors, connections, asserts, locks, etc.

- MySQL and mariadb – multiple servers, each showing: bandwidth, queries/s, handlers, locks, issues, tmp operations, connections, binlog metrics, threads, innodb

- PostgreSQL – multiple servers, each showing: per database statistics (connections, tuples read – written – returned, transactions, locks), backend processes, indexes, tables, write ahead, background writer and more.

- Proxy SQL – collects Proxy SQL backend and frontend performance metrics.

- Redis – multiple servers, each showing: operations, hit rate, memory, keys, clients, slaves.

- RethinkDB – connects to multiple rethinkdb servers (local or remote) to collect real-time metrics.

Message Brokers

- beanstalkd – global and per tube monitoring.

- RabbitMQ – performance and health metrics.

Search and Indexing

- ElasticSearch – search and index performance, latency, timings, cluster statistics, threads statistics, etc.

DNS Servers

- bind_rndc – parses named.stats dump file to collect real-time performance metrics. All versions of bind after 9.6 are supported.

- dnsdist – performance and health metrics.

- ISC Bind (named) – multiple servers, each showing: clients, requests, queries, updates, failures and several per view metrics. All versions of bind after 9.9.10 are supported.

- NSD – queries, zones, protocols, query types, transfers, etc.

- PowerDNS – queries, answers, cache, latency, etc.

- unbound – performance and resource usage metrics.

- dns_query_time – DNS query time statistics

Time Servers

- chrony – uses the chronyc command to collect chrony statistics (Frequency, Last offset, RMS offset, Residual freq, Root delay, Root dispersion, Skew, System time).

- ntpd – connects to multiple ntpd servers (local or remote) to provide statistics of system variables and, optionally, peer variables.

Mail Servers

- Dovecot – POP3/IMAP servers.

- Exim – message queue (emails queued).

- Postfix – message queue (entries, size).

Hardware Sensors

- IPMI – enterprise hardware sensors and events.

- lm-sensors – temperature, voltage, fans, power, humidity, etc.

- Nvidia – collects information for Nvidia GPUs.

- RPi – Raspberry Pi temperature sensors.

- w1sensor – collects data from connected 1-Wire sensors.

UPSes

- apcupsd – load, charge, battery voltage, temperature, utility metrics, output metrics

- NUT – load, charge, battery voltage, temperature, utility metrics, output metrics

- Linux Power Supply – collects metrics reported by power supply drivers on Linux.

Social Sharing Servers

- RetroShare – connects to multiple retroshare servers (local or remote) to collect real-time performance metrics.

Security

- Fail2Ban – monitors the fail2ban log file to check all bans for all active jails.

Authentication, Authorization, Accounting (AAA, RADIUS, LDAP) Servers

- FreeRadius – uses the radclient command to provide freeradius statistics (authentication, accounting, proxy-authentication, proxy-accounting).

Telephony Servers

- opensips – connects to an opensips server (localhost only) to collect real-time performance metrics.

Household Appliances

- SMA webbox – connects to multiple remote SMA webboxes to collect real-time performance metrics of the photovoltaic (solar) power generation.

- Fronius – connects to multiple remote Fronius Symo servers to collect real-time performance metrics of the photovoltaic (solar) power generation.

- StiebelEltron – collects the temperatures and other metrics from your Stiebel Eltron heating system using their Internet Service Gateway (ISG web).

Game Servers

- SpigotMC – monitors Spigot Minecraft server ticks per second and number of online players using the Minecraft remote console.

Distributed Computing

- BOINC – monitors task states for local and remote BOINC client software using the remote GUI RPC interface. Also provides alarms for a handful of error conditions.

Media Streaming Servers

- IceCast – collects the number of listeners for active sources.

Monitoring Systems

- Monit – collects metrics about monit targets (filesystems, applications, networks).

Provisioning Systems

- Puppet – connects to multiple Puppet Server and Puppet DB instances (local or remote) to collect real-time status metrics.

You can easily extend Netdata by writing plugins that collect data from any source, using any computer language.